Because We Can’t Even Agree On What It Is

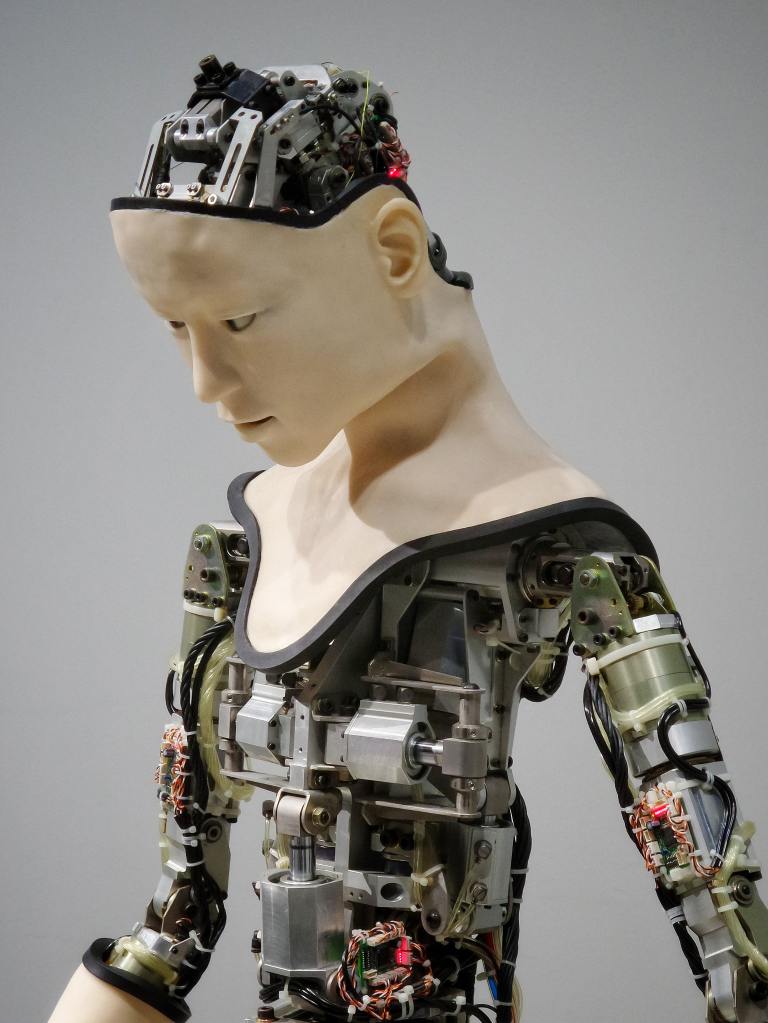

Photo courtesy of Possessed Photography.

There is such a swirl of discussion around Artificial Intelligence and whether it will supplant human work. This largely stems from a real misunderstanding of what AI is and what it can do. According to IBM, Artificial Intelligence is: “the science and engineering of making intelligent machines, especially intelligent computer programs. It is related to the similar task of using computers to understand human intelligence, but AI does not have to confine itself to methods that are biologically observable.”

There’s a lot packed into that definition. No wonder people don’t know quite what to make of it!

It seems to me that the biggest challenge is that people mistake Intelligence for Sentience. Humans certainly possess both, but it may not even be a realistic goal to presume that we can master Artificial Sentience.

What do I mean by this?

In a nutshell, “sentience is the ability to feel, sense or experience perceptions subjectively.” Those working in the field believe that capability to be decades away, if achievable at all. Building self-awareness into a machine is an unlikely undertaking anyway. To reach that state, researchers would first have to build programs that achieve generalized intelligence: a single learning machine with the ability to solve problems, recognize environments and changing requirements, and keep learning. Even once that capacity is established, such a machine would only be reaching the edges of a potential ability to learn consciousness.

Therein lies the rub. A machine is unlikely to “learn” how to “feel,” since the uniquely human capacity for complex emotion is perhaps the most difficult thing of all to teach. Just as a machine has no conception of physical pain or healing, the ability to feel attachment or loss is not necessarily a programmable skill.

No one in the field claims that a Roomba has any particular attachment to the floors it vacuums, despite the fact that it does indeed learn what the obstacles to successful vacuuming are. No one believes that a robot vacuum enjoys the sensation of electrical current from its charging dock running through its wires, even if we imagine those wires to function much like veins.

Robots still can’t feel.

Robots can mimic, absolutely. They are fantastic at aping what humans do, if they are taught to do so. Through series after series of If/Then statements, a computer can perform all sorts of functions. My robot vacuum “understands” that If there is a chair leg in the way, Then it should rotate 30 degrees and try again. If the obstacle is still there, it will rotate again and again until it is able to move past it. The most recent robot vacuums (to stick with this example) go a step further: the vacuum “knows” that the chair is there and “learns” to avoid it, updating that knowledge only if it arrives at the spot and finds the chair has been moved. That said, the vacuum is not frustrated by the obstacle, nor is it relieved when the chair is moved to another room.
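To make the If/Then loop above concrete, here is a minimal sketch in Python. It is not how any real robot vacuum's firmware works (that code isn't public, and modern models rely on far more sophisticated mapping); the `is_blocked` sensor check, the `find_clear_heading` function, and the `ObstacleMemory` class are all hypothetical names invented for illustration. The point is what the code contains: rules and remembered positions, and nothing that stands for frustration or relief.

```python
from typing import Callable, Optional, Set, Tuple

Position = Tuple[int, int]


def find_clear_heading(
    is_blocked: Callable[[int], bool],  # hypothetical bump/obstacle sensor check
    start_heading: int = 0,
    step: int = 30,  # rotate 30 degrees per attempt, as in the example above
) -> Optional[int]:
    """Rotate in fixed steps until the path ahead is clear.

    Returns the first clear heading in degrees, or None if every
    heading in a full circle is blocked.
    """
    heading = start_heading % 360
    for _ in range(360 // step):
        if not is_blocked(heading):  # If the path is clear, go.
            return heading
        heading = (heading + step) % 360  # Then: rotate and try again.
    return None


class ObstacleMemory:
    """Hypothetical 'learning': remember where obstacles were seen."""

    def __init__(self) -> None:
        self.known: Set[Position] = set()

    def remember(self, position: Position) -> None:
        self.known.add(position)

    def forget(self, position: Position) -> None:
        # Called when the robot returns and finds the obstacle has moved.
        self.known.discard(position)

    def should_avoid(self, position: Position) -> bool:
        return position in self.known
```

For instance, if a chair leg blocks the 0° and 30° headings, `find_clear_heading(lambda h: h in {0, 30})` rotates twice and returns `60`. The "learning" is just a set of coordinates that gets updated when the world changes.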

I often think of it as similar to what I learned in my first college linguistics course: animals can communicate, but they do not have language. That is, my dog can let me know when he is happy, but he cannot tell me that his father was poor but honest. Machines can learn, but they cannot emote. They can mirror emotion, sure, but can they have original and unique feelings about their situations? No. At least not yet, and not for the foreseeable future.

But that does not mean that machines cannot learn. They very much can. Just as a third grader can sit quietly, ingesting information and retaining it for future reference, a machine can do the same. A student learning multiplication tables soon learns that 5 times any whole number can only result in a number ending in 5 or 0. A machine has the capacity to learn and retain that information and apply it to millions of situations, millions of times faster than the human mind can. A machine can aggregate vocabularies, numerical sets, geographic data, and more, all far more quickly and efficiently than the human brain.

That’s why machine learning and artificial intelligence are thrilling and fantastic.

But until that computer program can harness things like anticipation (that the car in the next lane looks like it will merge into yours) or fear (that the spider crawling up the wall has the potential to cause harm), and respond accordingly, our jobs are all safe. Machines can certainly learn to edge over if a car is too close or to react if an interloper is nearby, but they do not have the capacity for self-awareness.

We mustn’t confuse intelligence with sentience. If we avoid that confusion, we’ll soon welcome the advancements of AI and machine learning, harnessing them for what they are.

But remember, too, that until the machine can understand notions like initiative, relaxation, and joy, it remains a tool for us to use. It may well be that the mindless robots are our biggest threat, not the ones that could one day feel and thus offer us things like helpfulness, empathy, and emotional support.

Susan is a technical content strategist and researcher of all things automated. She lives in Baltimore with her two dogs and snuggles with them when she’s not on her bike or swimming laps in the pool. She is an avid traveler and reader of nonfiction. Subscribe to this blog to learn more about technical writing, communicating, and machine learning within those domains.